Just as the WCF plumbing enables clients to call server operations asynchronously, without the server needing to know anything about it, WCF also allows service operations to be defined asynchronously. So an operation like:

    [OperationContract]
    string DoWork(int value);

…might instead be expressed in the service contract as:

    [OperationContract(AsyncPattern = true)]
    IAsyncResult BeginDoWork(int value, AsyncCallback callback, object state);

    string EndDoWork(IAsyncResult result);

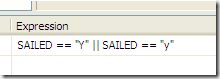

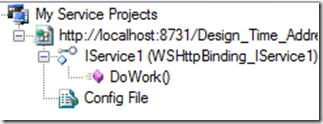

Note that the two forms are equivalent, and indistinguishable in the WCF metadata: they both expose an operation called DoWork[1]:

... but in the async version you are allowing the original WCF dispatcher thread to be returned to the pool (and hence service further requests) while you carry on processing in the background. When your operation completes, you call the WCF-supplied callback, which then completes the request by sending the response to the client down the (still open) channel. You have decoupled the request lifetime from that of the original WCF dispatcher thread.

From a scalability perspective this is huge, because you can minimise the time WCF threads are tied up waiting for your operation to complete. Threads are an expensive system resource, and not something you want lying around blocked when they could be doing other useful work.

Note this only makes any sense if the operation you’re implementing really is asynchronous in nature, otherwise the additional expense of all this thread-switching outweighs the benefits. Wenlong Dong, one of the WCF team, has written a good blog article about this, but basically it all comes down to I/O operations, which are the ones that already have Begin/End overloads implemented.

Since Windows NT, most I/O operations (file handles, sockets etc…) have been implemented using I/O Completion Ports (IOCP). This is a complex subject, and not one I will pretend to really understand, but basically Windows already handles I/O operations asynchronously, as IOCPs are essentially work queues. Up in .NET land, if you are calling an I/O operation synchronously, someone, somewhere, is waiting on a wait handle for the I/O to complete before freeing up your thread. By using the Begin/End methods provided in classes like System.IO.FileStream, System.Net.Sockets.Socket and System.Data.SqlClient.SqlCommand, you start to take advantage of the asynchronicity that’s already plumbed into the Windows kernel, and you can avoid blocking that thread.
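To make the Begin/End shape concrete, here is a minimal sketch using FileStream.BeginRead / EndRead. The class name BeginEndDemo, the ReadStart helper and the scratch file are made up for illustration; only the FileStream calls are the framework's own.

```csharp
using System;
using System.IO;
using System.Text;
using System.Threading;

public static class BeginEndDemo
{
    // Reads the first bytes of a file via BeginRead/EndRead and returns them as text.
    public static string ReadStart(string path)
    {
        var buffer = new byte[16];
        string text = null;
        var done = new ManualResetEvent(false);

        // The final 'true' (useAsync) requests overlapped I/O on the file handle.
        var stream = new FileStream(path, FileMode.Open, FileAccess.Read,
                                    FileShare.Read, 4096, true);

        // BeginRead returns straight away; the callback runs once the read completes.
        stream.BeginRead(buffer, 0, buffer.Length, delegate(IAsyncResult ar)
        {
            var s = (FileStream)ar.AsyncState;  // the state object passed to BeginRead
            int bytesRead = s.EndRead(ar);      // collects the result (or rethrows)
            text = Encoding.ASCII.GetString(buffer, 0, bytesRead);
            s.Dispose();
            done.Set();
        }, stream);

        // A real service would return here and let the callback finish the request.
        // This demo waits only so that it can hand the text back to the caller.
        done.WaitOne();
        return text;
    }

    public static void Main()
    {
        string path = Path.GetTempFileName();   // scratch file for the demo
        File.WriteAllText(path, "hello");
        Console.WriteLine(ReadStart(path));
        File.Delete(path);
    }
}
```

Note how the stream itself is passed as the state argument so the callback can find it again. That is exactly the trick that causes trouble later, once somebody else's state also has to travel through the same parameter.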

So how to get started?

Here’s the simple case: a WCF service with an async operation, the implementation of which calls an async method on an instance field which performs the actual IO operation. We’ll use the same BeginDoWork / EndDoWork signatures we described above:

    public class SimpleAsyncService : IMyAsyncService
    {
        readonly SomeIoClass _innerIoProvider = new SomeIoClass();

        public IAsyncResult BeginDoWork(int value, AsyncCallback callback, object state)
        {
            return _innerIoProvider.BeginDoSomething(value, callback, state);
        }

        public string EndDoWork(IAsyncResult result)
        {
            return _innerIoProvider.EndDoSomething(result);
        }
    }

I said this was simple, yes? All the service has to do to implement the Begin/End methods is delegate to the composed object that performs the underlying async operation.

This works because the only state the service has to worry about – the ‘SomeIoClass’ instance – is already stored on one of its instance fields. It’s the caller’s responsibility (i.e. WCF’s) to call the End method on the same instance that the Begin method was called on, so all our state management is taken care of for us.
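SomeIoClass is never defined in this post, so if you want to run the simple case outside WCF you need a stand-in. The sketch below is one possibility and nothing more: it assumes a runtime where System.Threading.Tasks is available (.NET 4 onwards), it does no real I/O (a pool thread just builds a string), and the SimpleDemo class is invented for the example. The service is the one shown above, minus the contract interface so that it compiles standalone.

```csharp
using System;
using System.Threading.Tasks;

// Hypothetical stand-in for SomeIoClass: no real I/O, just a pool thread
// that produces a string. Assumes Task is available (.NET 4 or later).
public class SomeIoClass
{
    public IAsyncResult BeginDoSomething(int value, AsyncCallback callback, object state)
    {
        // Task implements IAsyncResult and exposes 'state' as its AsyncState.
        var task = new Task<string>(_ => "result for " + value, state);
        if (callback != null)
            task.ContinueWith(t => callback(t));
        task.Start();
        return task;
    }

    public string EndDoSomething(IAsyncResult result)
    {
        // Like most End methods, this only accepts what its own Begin returned.
        return ((Task<string>)result).Result;
    }
}

// The simple service, without the WCF contract interface.
public class SimpleAsyncService
{
    readonly SomeIoClass _innerIoProvider = new SomeIoClass();

    public IAsyncResult BeginDoWork(int value, AsyncCallback callback, object state)
    {
        return _innerIoProvider.BeginDoSomething(value, callback, state);
    }

    public string EndDoWork(IAsyncResult result)
    {
        return _innerIoProvider.EndDoSomething(result);
    }
}

public static class SimpleDemo
{
    // Plays the part of WCF: calls Begin, then End on the same instance.
    public static string Run(int value)
    {
        var service = new SimpleAsyncService();
        IAsyncResult ar = service.BeginDoWork(value, null, "caller state");
        return service.EndDoWork(ar);   // blocks until the operation has finished
    }

    public static void Main()
    {
        Console.WriteLine(Run(42));     // prints "result for 42"
    }
}
```

The cast in EndDoSomething is typical of real End methods: they expect to be given back the very IAsyncResult their Begin method returned, which matters as soon as you start wrapping results.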

Unfortunately it’s not always that simple.

Say the IO operation is something you want to / have to new-up each time it’s invoked, like a SqlCommand that uses one of the parameters (or somesuch). If you just modify the code to create the instance:

    public IAsyncResult BeginDoWork(int value, AsyncCallback callback, object state)
    {
        var ioProvider = new SomeIoClass();
        return ioProvider.BeginDoSomething(value, callback, state);
    }

…you are instantly in a world of pain. How are you going to implement your EndDoWork method, since you just lost the reference to the SomeIoClass instance you called BeginDoSomething on?

What you really want to do here is something like this:

    public IAsyncResult BeginDoWork(int value, AsyncCallback callback, object state)
    {
        var ioProvider = new SomeIoClass();
        return ioProvider.BeginDoSomething(value, callback, ioProvider); // !
    }

    public string EndDoWork(IAsyncResult result)
    {
        var ioProvider = (SomeIoClass) result.AsyncState;
        return ioProvider.EndDoSomething(result);
    }

We’ve passed the ‘SomeIoClass’ as the state parameter on the inner async operation, so it’s available to us in the EndDoWork method by casting the IAsyncResult.AsyncState property. But now we’ve lost the caller’s state, and worse, the IAsyncResult we return to the caller has our state not their state, so they’ll probably blow up. I know I did.

One possible fix is to maintain a state lookup dictionary, but what to key it on? Both the callback and the state may be null, and even if they’re not, there’s nothing to say they have to be unique. You could use the IAsyncResult itself, on the basis that it almost certainly is unique, but then there’s a race condition: you don’t get the IAsyncResult until the Begin call returns, by which time the callback might already have fired. So your End method may get called before you can store the state in your dictionary. Messy.
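The race is easy to provoke if the inner operation completes before its Begin method returns, which Begin/End implementations are allowed to do. The following is a contrived sketch: EagerResult, BeginInner and DictionaryRaceDemo are all invented for the illustration and stand in for whatever the real inner operation would be.

```csharp
using System;
using System.Collections.Generic;
using System.Threading;

// Hypothetical result for an operation that has already finished
// by the time its Begin method returns.
sealed class EagerResult : IAsyncResult
{
    readonly ManualResetEvent _handle = new ManualResetEvent(true);
    readonly object _state;

    public EagerResult(object state) { _state = state; }

    public object AsyncState { get { return _state; } }
    public WaitHandle AsyncWaitHandle { get { return _handle; } }
    public bool CompletedSynchronously { get { return true; } }
    public bool IsCompleted { get { return true; } }
}

public static class DictionaryRaceDemo
{
    // Per-call state keyed on the IAsyncResult, as suggested above.
    static readonly Dictionary<IAsyncResult, string> PerCallState =
        new Dictionary<IAsyncResult, string>();

    // Stand-in for the inner Begin method: fires the callback before returning.
    static IAsyncResult BeginInner(AsyncCallback callback, object state)
    {
        var result = new EagerResult(state);
        if (callback != null)
            callback(result);
        return result;
    }

    // Returns whether the callback could find its per-call state.
    public static bool StateFoundInCallback()
    {
        bool found = false;
        IAsyncResult ar = BeginInner(
            delegate(IAsyncResult r) { found = PerCallState.ContainsKey(r); },
            null);

        // Too late: the callback (and so potentially the End method) has already run.
        PerCallState[ar] = "my per-call state";
        return found;
    }

    public static void Main()
    {
        Console.WriteLine(StateFoundInCallback());   // prints "False"
    }
}
```

With a genuinely asynchronous operation the failure becomes intermittent rather than guaranteed, which is arguably worse.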

Really what you’re supposed to do is implement your own IAsyncResult, since this is the only thing that’s guaranteed to be passed from Begin to End. Since we’re not actually creating the real asynchronous operation here (the underlying I/O operation is), we don’t control the wait handle, so the simplest approach seemed to be to wrap the IAsyncResult in such a way that the original caller still sees their expected state, whilst still storing our own.

I’m not sure if there’s another way of doing this, but this is the only arrangement I could make work:

    public IAsyncResult BeginDoWork(int value, AsyncCallback callback, object state)
    {
        var ioProvider = new SomeIoClass();

        // Wrap the caller's callback so it is handed a result exposing the caller's state
        AsyncCallback wrappedCallback = null;
        if (callback != null)
            wrappedCallback = delegate(IAsyncResult result1)
            {
                var wrappedResult1 = new AsyncResultWrapper(result1, state);
                callback(wrappedResult1);
            };

        // Our own state (the provider) travels as the inner operation's state
        var result = ioProvider.BeginDoSomething(value, wrappedCallback, ioProvider);
        return new AsyncResultWrapper(result, state);
    }

Fsk!

Note that we have to wrap the callback, as well as the return. Otherwise the callback (if present) is called with a non-wrapped IAsyncResult. If you passed the client’s state as the state parameter, then you can’t get your state in EndDoWork(), and if you passed your state then the client’s callback will explode. As will your head trying to follow all this.

Fortunately the EndDoWork() just looks like this:

    public string EndDoWork(IAsyncResult result)
    {
        var wrapper = (AsyncResultWrapper) result;
        var ioProvider = (SomeIoClass) wrapper.PrivateState;

        // Hand the inner operation its own IAsyncResult back, not our wrapper
        return ioProvider.EndDoSomething(wrapper.InnerResult);
    }

And for the sake of completeness, here’s the AsyncResultWrapper:

    private class AsyncResultWrapper : IAsyncResult
    {
        private readonly IAsyncResult _result;
        private readonly object _publicState;

        public AsyncResultWrapper(IAsyncResult result, object publicState)
        {
            _result = result;
            _publicState = publicState;
        }

        // What the original caller sees: their own state
        public object AsyncState
        {
            get { return _publicState; }
        }

        // What we stashed on the inner operation: our state
        public object PrivateState
        {
            get { return _result.AsyncState; }
        }

        // The IAsyncResult that the inner Begin call actually returned
        public IAsyncResult InnerResult
        {
            get { return _result; }
        }

        // Elided: Delegated implementation of IAsyncResult using composed IAsyncResult
    }
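For anyone wanting to try the wrapper in isolation, here is a sketch with the remaining IAsyncResult members written out as plain delegation, which is what the elided comment describes. The assumptions are mine: the class is made public so it can stand alone, InnerResult is an accessor for the wrapped result, and the demo uses a started Task as the inner operation (Task implements IAsyncResult, so this needs .NET 4 or later). WrapperDemo is invented for the example.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

public sealed class AsyncResultWrapper : IAsyncResult
{
    private readonly IAsyncResult _result;
    private readonly object _publicState;

    public AsyncResultWrapper(IAsyncResult result, object publicState)
    {
        _result = result;
        _publicState = publicState;
    }

    // The caller's state, as they expect to see it.
    public object AsyncState { get { return _publicState; } }

    // Our own state, carried on the inner operation.
    public object PrivateState { get { return _result.AsyncState; } }

    // The inner IAsyncResult, for passing to the inner End method.
    public IAsyncResult InnerResult { get { return _result; } }

    // Everything else simply defers to the inner operation.
    public WaitHandle AsyncWaitHandle { get { return _result.AsyncWaitHandle; } }
    public bool CompletedSynchronously { get { return _result.CompletedSynchronously; } }
    public bool IsCompleted { get { return _result.IsCompleted; } }
}

public static class WrapperDemo
{
    public static string Run()
    {
        // Stand-in inner operation whose AsyncState is "ours".
        var inner = new Task<string>(s => "done", "ours");
        inner.Start();

        var wrapped = new AsyncResultWrapper(inner, "theirs");
        wrapped.AsyncWaitHandle.WaitOne();   // the inner operation's wait handle

        string value = ((Task<string>)wrapped.InnerResult).Result;
        return wrapped.AsyncState + "/" + wrapped.PrivateState + "/" + value;
    }

    public static void Main()
    {
        Console.WriteLine(Run());   // prints "theirs/ours/done"
    }
}
```

The caller only ever sees "theirs" through AsyncState, while the End method can still recover both our state and the inner result.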

By now you are thinking ‘what a mess’, and believe me I am right with you there. It took a bit of trial-and-error to get this working, it’s a major pain to have to implement this for each and every nested async call and – guess what – we have lots of them to do. I was pretty sure there must be a better way, but I looked and I couldn’t find one. So I decided to write one, which we’ll cover next time.

Take-home:

Operations that are intrinsically asynchronous – like I/O – should be exposed as asynchronous operations, to improve the scalability of your service.

Until .NET 4 comes out, chaining and nesting async operations is a major pain in the arse if you need to maintain any per-call state.

Update: 30/9/2009 – Fixed some typos in the sample code

[1] Incidentally, if you implement both the sync and async forms for a given operation (and keep the Action name for both the same, or the default), it appears the sync version gets called preferentially.