Recently both at work and at home I was faced with the same problem: a PowerShell ‘control’ script that needed to pass parameters down to an arbitrary series of child scripts (i.e. enumerating over scripts in a directory, and executing them in turn).

I needed a way of binding the parameters passed to the child scripts to what was passed to the parent script, and I thought that splatting would be a great fit here. Splatting, if you aren’t aware of it, is a way of binding a hashtable or array to a command’s parameters:

# i.e. replace this:

dir -Path:C:\temp -Filter:*

# with this:

$dirArgs = @{Filter="*"; Path="C:\temp"}

dir @dirArgs

Note the @ sign on the last line. That’s the splatting operator (yes, it’s also the hashtable operator as @{} and the array operator as @(); it’s a busy symbol). It binds the contents of $dirArgs to the command’s parameters, rather than attempting to pass $dirArgs itself as the first positional argument.
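To make the difference concrete, here is a minimal sketch. The function Show-Pair and its two parameters are made up for illustration; a hashtable is splatted by name and an array by position.

```powershell
# Show-Pair is a hypothetical function used only for this illustration.
function Show-Pair {
    param($Path, $Filter)
    "Path=$Path Filter=$Filter"
}

$byName     = @{ Filter = '*'; Path = 'C:\temp' }   # keys are matched to parameter names
$byPosition = @('C:\temp', '*')                     # items are bound in order

$fromHashtable = Show-Pair @byName       # splatted: binds Path and Filter by name
$fromArray     = Show-Pair @byPosition   # splatted: binds Path, then Filter, by position
$notSplatted   = Show-Pair $byName       # plain $: the whole hashtable lands in -Path
```

The first two calls produce the same string; the third passes the hashtable object itself as a single argument, which is exactly what splatting avoids.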

So I thought I could just use this to take any and all arguments passed to my ‘control’ script and get them bound to the child scripts. By name, mind, not by position: binding by position would be bad, because each of the child scripts has different parameters. I want PowerShell to do the heavy lifting of binding the appropriate parameters to the child scripts.

Gotcha #1

I first attempted to splat $args, but I’d forgotten that $args only holds the ‘left over’ arguments, after all the arguments that bind to declared parameters have been taken out. The bound ones go into $PSBoundParameters…
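A small sketch of that split, using a made-up function Show-Binding with a single declared parameter:

```powershell
# Show-Binding is a hypothetical function used only for this illustration.
function Show-Binding {
    param($First)
    # Arguments that matched a declared parameter
    "bound: $($PSBoundParameters.Keys -join ',')"
    # Whatever was left over after binding
    "args: $($args -join ',')"
}

$binding = @(Show-Binding one two three)
# $binding[0] is 'bound: First'
# $binding[1] is 'args: two,three'
```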

Gotcha #2

…but only the ones that actually match parameters in the current script/function. Even if you pass an argument to a script in ‘named parameter’ style, like this:

SomeScript.ps1 -someName:someValue

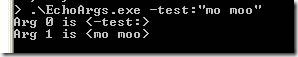

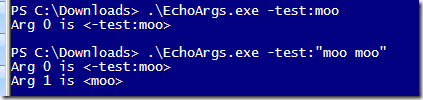

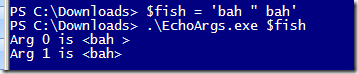

…if there’s no parameter ‘someName’ on that script, this goes into $args as two different items, one being ‘-someName:’ and the next being ‘someValue’. This was surprising. Worse, once the arguments are split up in $args they get splatted positionally, even if they would otherwise match parameters on what’s being called. This seems like a design mistake to me (update: there is a Connect issue for this).
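The splitting is easy to see for yourself. In this sketch the function Show-Args is hypothetical; it declares one parameter and prints each left-over item in brackets, so the boundaries between items are visible:

```powershell
# Show-Args is a hypothetical function used only for this illustration.
function Show-Args {
    param($Known)
    # Emit each left-over argument on its own, wrapped in brackets
    foreach ($item in $args) { "[$item]" }
}

# -Known matches a declared parameter; -someName does not
$leftOver = @(Show-Args -Known 1 -someName:someValue)
$leftOver
```

The name and the value come back as two separate items rather than one name/value pair.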

Basically what this meant was that, unless I started parsing $args myself, all the parameters on all the child scripts had to be declared on the parent (or at least all the ones I wanted to splat).
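As a rough sketch of that arrangement: the parent declares the union of the child parameters and splats what it was given. Everything named here (Invoke-ChildScripts, the two child scripts and their parameters) is invented for the example, the children are written to a temp folder only to keep it self-contained, and it assumes your execution policy allows running local scripts.

```powershell
# Hypothetical child scripts, each with a different parameter.
$childDir = Join-Path ([IO.Path]::GetTempPath()) ("splat-sketch-" + [Guid]::NewGuid())
New-Item -ItemType Directory -Path $childDir | Out-Null
Set-Content -Path (Join-Path $childDir '01-first.ps1')  -Value 'param($Name) "first: $Name"'
Set-Content -Path (Join-Path $childDir '02-second.ps1') -Value 'param($Count) "second: $Count"'

# Hypothetical parent: declares every parameter any child might want.
function Invoke-ChildScripts {
    param($ScriptDirectory, $Name, $Count)

    # Copy the bound parameters, leaving out the one meant only for the parent
    $childArgs = @{}
    foreach ($key in $PSBoundParameters.Keys) {
        if ($key -ne 'ScriptDirectory') { $childArgs[$key] = $PSBoundParameters[$key] }
    }

    # Run each child in name order, splatting the same hashtable to all of them
    Get-ChildItem -Path $ScriptDirectory -Filter *.ps1 | Sort-Object Name | ForEach-Object {
        & $_.FullName @childArgs
    }
}

$results = @(Invoke-ChildScripts -ScriptDirectory $childDir -Name 'x' -Count 3)
Remove-Item -Path $childDir -Recurse
```

Each child picks out the entries that match its own parameters by name. The children here are plain (non-advanced) scripts, so the entries they don't declare are simply left over.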

Gotcha #3

Oh, and $PSBoundParameters only contains the parameters that the caller actually supplied. Those left unset, i.e. the ones falling back to their default values, aren’t in there. So if you want those defaults to propagate, you’ll have to add them back in yourself:

function SomeFunction(

$someValue = 'my default'

){

$PSBoundParameters['someValue'] = $someValue

}

Very tiresome.
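With more than a couple of parameters, the same idea can be done generically. This is only a sketch under some assumptions: Get-EffectiveParameters and its parameters are made up, it relies on $MyInvocation.MyCommand.Parameters listing the declared parameters of a plain function, and it skips anything whose value is still $null.

```powershell
# Hypothetical function; only the technique matters, not the names.
function Get-EffectiveParameters {
    param($someValue = 'my default', $other)

    # Start with what the caller actually supplied
    $effective = @{}
    foreach ($key in $PSBoundParameters.Keys) {
        $effective[$key] = $PSBoundParameters[$key]
    }

    # Add back declared parameters that fell through to a non-null default
    foreach ($name in $MyInvocation.MyCommand.Parameters.Keys) {
        if (-not $effective.ContainsKey($name)) {
            $value = Get-Variable -Name $name -ValueOnly -ErrorAction SilentlyContinue
            if ($null -ne $value) { $effective[$name] = $value }
        }
    }

    $effective
}

$withDefault = Get-EffectiveParameters -other 5
$noArgs      = Get-EffectiveParameters
```

Building a separate hashtable, rather than writing into $PSBoundParameters, also leaves the original record of what the caller passed untouched.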

Gotcha #4

$PSBoundParameters gets reset after you dot-source another script, so you need to capture a reference to it before that :-(
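One defensive way to do the capture is to copy the entries into your own hashtable first, so nothing that happens later can affect them. A sketch, with a made-up function name and a throwaway helper script created only so the example is self-contained:

```powershell
# Invoke-Parent and the helper script are hypothetical.
function Invoke-Parent {
    param($Name)

    # Copy the bound parameters *before* dot-sourcing anything
    $captured = @{}
    foreach ($key in $PSBoundParameters.Keys) {
        $captured[$key] = $PSBoundParameters[$key]
    }

    # Dot-source a helper script into this scope
    $helper = Join-Path ([IO.Path]::GetTempPath()) ("helper-sketch-" + [Guid]::NewGuid() + ".ps1")
    Set-Content -Path $helper -Value '$helperLoaded = $true'
    . $helper
    Remove-Item -Path $helper

    # Use the copy from here on, not $PSBoundParameters
    $captured
}

$capturedParams = Invoke-Parent -Name 'x'
```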

Gotcha #5

Just when you thought you were finished: if the child scripts use [CmdletBinding()], then you’ll probably get an error when splatting, because you’re trying to splat more arguments than the script you’re calling actually has parameters.
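A sketch of the difference, using two made-up functions that are identical apart from the attribute:

```powershell
# Both functions are hypothetical; they differ only in [CmdletBinding()].
function Invoke-SimpleChild {
    param($Name)
    "simple: $Name"
}

function Invoke-AdvancedChild {
    [CmdletBinding()]
    param($Name)
    "advanced: $Name"
}

# One entry more than either child declares
$splat = @{ Name = 'x'; Extra = 'not declared by either child' }

# The plain function tolerates the extra entry
$simpleResult = Invoke-SimpleChild @splat

# The advanced function refuses to bind a parameter it doesn't have
$advancedError = $null
try {
    $advancedResult = Invoke-AdvancedChild @splat
} catch {
    $advancedError = $_.Exception.Message
}
```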

To avoid the error you’ll have to go back from an ‘advanced’ function to a ‘vanilla’ one, but since [CmdletBinding()] is implied by any of the [Parameter] attributes, you’ll have to remove those too :-( So it’s back to $myParam = $(throw 'MyParam is required') style validation, unfortunately.
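For reference, a minimal sketch of that guard style. The function name is made up; the default expression only runs when the caller leaves the parameter out.

```powershell
# Invoke-Child is a hypothetical function used only for this illustration.
function Invoke-Child {
    param(
        # No [Parameter(Mandatory)] here, so the function stays 'vanilla'
        [string]$MyParam = $(throw 'MyParam is required')
    )
    "MyParam is $MyParam"
}

$supplied = Invoke-Child -MyParam 'x'

# Omitting the parameter evaluates the default, which throws
$guardMessage = $null
try {
    Invoke-Child | Out-Null
} catch {
    $guardMessage = $_.Exception.Message
}
```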

(Also, if you are using [CmdletBinding()], remember to remove any [switch]$verbose parameters (or any others that match the ‘common’ cmdlet parameters), or you’ll get another error about duplicate properties when splatting, since your script now has a -Verbose switch automatically. The duplication only becomes an issue when you splat.)

What Did We Learn?

Either: Don’t try this at home.

Or: Capture $PSBoundParameters, put the defaults back in, and splat it to child scripts that don’t use [CmdletBinding()] and aren’t ‘advanced functions’.

Type your parameters, and put your guard throws back, just in case you end up splatting positionally.

Have a lie down