Just in time for the end of 2008, Microsoft finally got Database Edition 2008 GDR out the door. This replaces the version that came with VS 2008, primarily bringing with it SQL Server 2008 compatibility.

So GDR is now Microsoft's third bite at integrating the database development workflow into Visual Studio. Let's start with what it does right:

Obviously the main benefit of bringing the database into Visual Studio is you get source control for free. Sure - you've been able to script out your database and add it to source control before, but this way you can check in database changes and the code that depends on them as one atomic checkin, against a work item. That's actually pretty neat from a tracking / branching point of view.

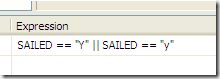

And because it's a fully model-driven system, all the SQL in the project is tested for consistency and correctness at build time. You could do this before of course (script out and build a structure-only database to check it builds), but this way you know any problems you find are local to your workspace, so it really was you that broke things.

The output from the build process is a model that completely describes your database schema, so this can be used to sync up test and production databases, either via the IDE, MSBuild or a redistributable command line utility that can be incorporated into your deployment / install process. Not that you couldn't have done all this with RedGate's SqlComparison SDK of course, but if you struggle to get licences other than those purchased through MSDN, this does make your life a bit easier, and at least it's been constructed with a build-once-deploy-many philosophy, unlike the clowns behind ClickOnce.

The model also (allegedly) provides a safer platform for database refactoring: it's supposed to 'remember' things like column renames as renames and treat them as such the next time a database is sync'd to the model. I guess only time will tell how well that works in the real world given, for example, multiple databases at different schema versions (i.e. Prod database 5 versions behind Test, etc.). Are the refactorings going to be journalled away and somehow replayed in the right order? If so, how does it know at which version to start? It's not a simple matter.
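To make the ordering question concrete, here's a rough sketch in Python of what a journal-and-replay scheme could look like. Nothing here reflects GDR's actual internals: the `Rename` record, the integer schema versions and both function names are invented for illustration, and it assumes every target database reliably records the version it is at, which is exactly the part that is hard in practice.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rename:
    """One journalled column rename (hypothetical record, not a GDR type)."""
    version: int   # schema version that introduced the rename
    table: str
    old: str
    new: str


def pending_renames(journal, db_version):
    """Return the renames a database at db_version has not seen, oldest first."""
    return sorted((r for r in journal if r.version > db_version),
                  key=lambda r: r.version)


def apply_renames(columns, journal, db_version):
    """Replay pending renames against a {table: [column, ...]} snapshot."""
    result = {table: list(cols) for table, cols in columns.items()}
    for r in pending_renames(journal, db_version):
        cols = result[r.table]
        cols[cols.index(r.old)] = r.new   # rename in place, keeping column order
    return result


journal = [
    Rename(3, "Customer", "Name", "FullName"),
    Rename(5, "Customer", "FullName", "DisplayName"),
]
prod = {"Customer": ["Id", "Name"]}       # assumed to be at version 2
test = {"Customer": ["Id", "FullName"]}   # assumed to be at version 4

print(apply_renames(prod, journal, 2))
print(apply_renames(test, journal, 4))
```

Both databases end up with the same column, but only because each one was replayed from the right starting version. Get that number wrong for Prod and the second rename looks for a column that isn't there.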

But Microsoft has still totally, totally missed the point, because there is still no design support. Not one. Nada. Not even a schema browser.

Hey, I can see where this is going, and I can see they're laying foundations here, but in my mind the fundamental job of a database development tool is to assist in database development. You know - the core stuff: creating objects and sprocs. Source control and a deployment workflow are secondary to that. But with GDR you'd better be hankering after the SQL Server 6.5 days, because you're going to be writing lots and lots of T-SQL. The best I can say is it's colour coded, but didn't we only just get IntelliSense in Management Studio? It just doesn't work for me.

But the alternative - do your dev / design in Management Studio and sync back to the model - is equally flawed, if only because you've got to keep syncing back again (and the sync UI is still a bit ragged). That's a whole lot of friction for relatively nebulous benefits (especially if you already have your database schema under some level of source control), and in my mind questions the whole utility of the product. What's the point of 'integrating' into the Visual Studio IDE if you have to keep tabbing out to do anything even remotely useful (think: execution plans)?

Similarly, whilst there's a Data Compare feature, it's not actually part of your database project, just something you do on an ad-hoc basis. So there's no easy way of designating a certain subset of tables as 'reference data' that should essentially be considered part of the schema, rather than part of the contents, and sync'd up when you redeploy. I'll accept that's probably a vNext feature, but its absence calls into question the viability of using GDR as an end-to-end database deployment manager in the first place, compared to tools like SqlPackager (or scripting using the SqlCompare / SqlDataCompare APIs).
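For what it's worth, the 'reference data as schema' idea boils down to something like the Python sketch below: treat the rows held in the project as the source of truth and work out what a redeploy would have to insert, update and delete. This is purely illustrative. The `plan_reference_sync` function and the `OrderStatus` lookup table are hypothetical, and it is not how GDR's Data Compare or the RedGate APIs are implemented.

```python
def plan_reference_sync(source_rows, target_rows):
    """Compare two {primary_key: row} maps and return the changes needed
    to make the target table match the source (the project's copy)."""
    inserts = {k: v for k, v in source_rows.items() if k not in target_rows}
    updates = {k: v for k, v in source_rows.items()
               if k in target_rows and target_rows[k] != v}
    deletes = sorted(k for k in target_rows if k not in source_rows)
    return inserts, updates, deletes


# Hypothetical 'OrderStatus' lookup table, keyed by Id.
project = {1: {"Name": "Open"}, 2: {"Name": "Shipped"}, 3: {"Name": "Cancelled"}}
deployed = {1: {"Name": "Open"}, 2: {"Name": "Dispatched"}, 4: {"Name": "Lost"}}

inserts, updates, deletes = plan_reference_sync(project, deployed)
print("insert:", inserts)
print("update:", updates)
print("delete:", deletes)
```

The diff itself is the easy part; the missing piece is somewhere in the project to say which tables get this treatment on every deployment.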

And of course the model parser isn't perfect, so occasionally a valid bit of SQL creates build errors because the model can't fathom what it's doing. In my case it can't seem to resolve the columns in a CTE that queries another (properly referenced) database, and barfs about ambiguous references where there are none. Unfortunately fidelity is always the problem with Model Driven Architectures, even ones that use a DSL as the model, because they have to - at some point - cater for a lowest common denominator, and at any rate they are in an arms race with whatever it is they're modelling. Microsoft is touting its extensible architecture, and IBM are apparently writing a DB2 provider, but what do you think the chances of 100% functionality coverage are? And for Oracle? I'll wager pretty low. So be prepared to take an 80/20 view, and put some bits into pre/post build scripts and / or re-structure them to keep the parser happy.

Then there are the little things. It creates a schema and object hierarchy when your database is initially imported, but it doesn't enforce it, and the Add \ New (whatever) templates don't pick up on it, so all your new objects end up in the wrong schema unless you're concentrating. If you do anything with any system views you'll need to reference the master database (%ProgramFiles%\Microsoft Visual Studio 9.0\VSTSDB\Extensions\SqlServer\2008\DBSchemas), so why is it not referenced to start with? And the doco's not been updated for RTM, so it contains all kinds of outdated information around the command line interfaces, and absolutely no content in the API section other than a list of the namespaces.

http://social.msdn.microsoft.com/Forums/en-US/vstsdb/thread/3f0f0888-9b37-4279-81d4-b924595630a8/

http://social.msdn.microsoft.com/Forums/en-US/vstsdb/thread/a966ac3c-c039-4c3f-9b6d-2882d9874282

So this still strikes me as neither fish nor fowl. Given the designers already exist in Management Studio it's a bit pisspoor that the DataDude team haven't borrowed them yet. I imagine I'll still be doing most of my work in Management Studio, and at best attempting to keep the database model in sync. And I suspect that for me, and many others, this will be just too much friction to bother with and so the whole effort will be more or less wasted.

Which is all a bit sad, given it's 2009, I've written a decade's worth of database-centric apps, and we've still not got this right. At least no-one's actually paying for this any more.